Flux and Llama 3 Now Run Across EdgeCloud’s Community Network

One of the persistent challenges with decentralized GPU networks is hardware diversity. Unlike a centralized data center, where every machine has identical specs, a community-driven network like Theta EdgeCloud spans thousands of nodes with different GPU models, varying amounts of vRAM, and different system configurations.

That variety is a strength when it comes to scale and geographic reach, but it means the software has to be smart enough to work across a wide range of hardware.

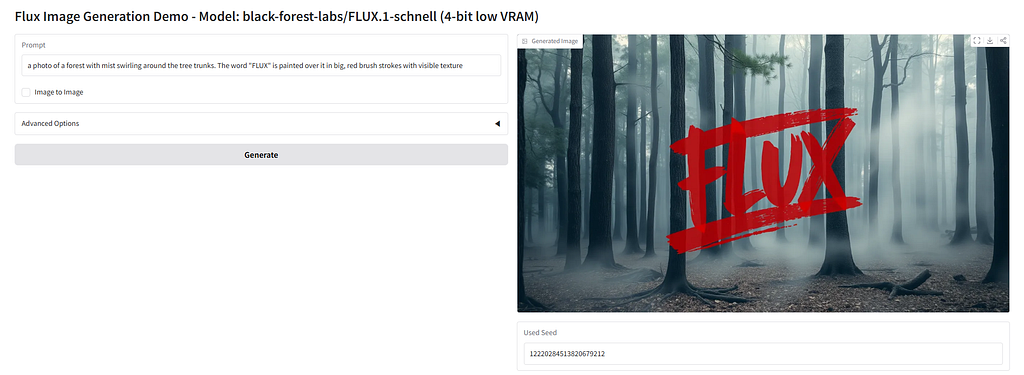

The Theta engineering team has been working on exactly that problem. We’ve now adapted several popular AI models, including Black Forest Labs’ Flux text-to-image model and Meta’s Llama 3 8B large language model, to run on community edge nodes with significantly lower vRAM requirements. The screenshot above shows the low-vRAM version of Flux running on an RTX 3090 with 24GB of vRAM on a community node, using 4-bit quantization.

What changed

The core work involved reducing the memory footprint of these models so they can run on consumer-grade GPUs that wouldn’t normally have enough vRAM for full-precision inference.
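To see why full-precision inference strains consumer cards, a rough back-of-the-envelope estimate helps. The sketch below assumes the publicly documented Llama 3 8B configuration (about 8.03 billion parameters, 32 layers, 8 key/value heads, head dimension 128) and counts only the weights plus the key/value cache; activations and framework overhead are ignored, and the context lengths are illustrative rather than the values EdgeCloud actually uses.

```python
# Rough vRAM estimate for LLM inference: weights + KV cache only.
# Assumed architecture: published Llama 3 8B config (32 layers,
# 8 key/value heads via grouped-query attention, head dim 128).
# Activations, CUDA context and framework overhead are ignored.

GIB = 1024 ** 3

def weight_bytes(num_params: float, bits_per_param: int) -> float:
    """Memory needed to hold the model weights at a given precision."""
    return num_params * bits_per_param / 8

def kv_cache_bytes(context_len: int, layers: int = 32, kv_heads: int = 8,
                   head_dim: int = 128, bytes_per_value: int = 2) -> int:
    """Memory for one sequence's cache: a K and a V tensor per layer."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * context_len

LLAMA3_8B_PARAMS = 8.03e9  # approximate published parameter count

for bits in (16, 8, 4):
    for ctx in (8192, 2048):
        total = weight_bytes(LLAMA3_8B_PARAMS, bits) + kv_cache_bytes(ctx)
        print(f"{bits:>2}-bit weights, {ctx:>5}-token context: {total / GIB:5.1f} GiB")
```

Under these assumptions, 16-bit weights alone take roughly 15 GiB, and each token of context adds 128 KiB of cache, or exactly 1 GiB at 8,192 tokens. Halving the bit width roughly halves the weight term, while shortening the context shrinks the cache term linearly, which is why both levers matter on a 24GB card.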

For image generation models like Flux, the team applied quantization and other memory-saving optimizations to bring vRAM usage down to levels that consumer GPUs can handle. For LLMs like Llama 3 8B, the approach focused on reducing and tuning context length along with related runtime parameters to fit within tighter vRAM budgets. Other workloads received similar treatment, with model-specific inference and output parameters adjusted for low-vRAM operation.
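The quantization idea can be illustrated with a minimal sketch of blockwise 4-bit integer quantization. This is a generic scheme chosen for illustration, assuming 64-weight blocks with one 16-bit scale per block; the post does not say which 4-bit format the Flux template uses, and production formats such as NF4 place their 16 levels differently. Each block is scaled by its largest absolute value, rounded to integers in [-7, 7], and packed two codes per byte. The function names are hypothetical.

```python
import random

def quantize_int4_blockwise(weights, block_size=64):
    """Symmetric absmax quantization of each block to integers in [-7, 7]."""
    codes, scales = [], []
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        absmax = max(abs(w) for w in block)
        scale = absmax / 7 if absmax > 0 else 1.0  # avoid divide-by-zero
        scales.append(scale)
        codes.extend(max(-7, min(7, round(w / scale))) for w in block)
    return codes, scales

def dequantize_int4_blockwise(codes, scales, block_size=64):
    """Recover approximate weights by multiplying each code by its block scale."""
    return [c * scales[i // block_size] for i, c in enumerate(codes)]

def pack_nibbles(codes):
    """Store two 4-bit codes per byte, offset by 8 so each nibble is unsigned."""
    if len(codes) % 2:
        codes = codes + [0]
    return bytes(((a + 8) << 4) | (b + 8) for a, b in zip(codes[0::2], codes[1::2]))

random.seed(0)
weights = [random.gauss(0, 0.02) for _ in range(4096)]  # synthetic weights
codes, scales = quantize_int4_blockwise(weights)
restored = dequantize_int4_blockwise(codes, scales)
packed = pack_nibbles(codes)

max_err = max(abs(w - r) for w, r in zip(weights, restored))
print(f"16-bit size: {len(weights) * 2} bytes")
print(f"4-bit size:  {len(packed) + len(scales) * 2} bytes (codes + 16-bit scales)")
print(f"max abs reconstruction error: {max_err:.5f}")
```

For the 4,096 synthetic weights, 16-bit storage takes 8,192 bytes, while the packed codes plus scales take 2,176 bytes (2,048 + 64 × 2). The price is a rounding error bounded by half of each block's scale, which is why small blocks, which keep scales tight, are a common design choice.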

Beyond individual model optimizations, the team also made several container images more adaptive to EdgeCloud’s heterogeneous community-node environment. At startup, these images now detect the available GPU, vRAM, and system RAM and automatically select suitable settings, including quantization level and runtime parameters, to improve compatibility across a wider range of hardware. Rather than requiring node operators to configure their setup manually, the system determines what the hardware can handle and adjusts accordingly.
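The startup logic described above might look roughly like the following minimal sketch. It is hypothetical and not EdgeCloud's actual code: it reads total vRAM of the first NVIDIA GPU through `nvidia-smi`, then walks a preference-ordered list of (bit width, context length) candidates for Llama 3 8B and picks the first whose estimated footprint, padded by an assumed 20% headroom, fits. The candidate list, headroom factor, and function names are illustrative assumptions, and system RAM checks are omitted.

```python
import shutil
import subprocess
from dataclasses import dataclass
from typing import Optional

# Hypothetical sketch of hardware-aware settings selection at container startup.
# Model numbers assume Llama 3 8B; candidates and headroom are illustrative.

@dataclass(frozen=True)
class RuntimeSettings:
    quant_bits: int   # bits per weight
    context_len: int  # maximum tokens per sequence

# Preferred configurations first: highest precision, longest context.
CANDIDATES = [(16, 8192), (8, 8192), (4, 8192), (4, 2048)]

def estimated_gib(bits: int, context_len: int, params: float = 8.03e9) -> float:
    """Weights plus fp16 KV cache (32 layers, 8 KV heads, head dim 128)."""
    weights = params * bits / 8
    kv_cache = 2 * 32 * 8 * 128 * 2 * context_len
    return (weights + kv_cache) / 1024 ** 3

def select_settings(vram_gib: float, headroom: float = 1.2) -> Optional[RuntimeSettings]:
    """Return the first candidate whose padded estimate fits in vram_gib."""
    for bits, ctx in CANDIDATES:
        if estimated_gib(bits, ctx) * headroom <= vram_gib:
            return RuntimeSettings(bits, ctx)
    return None  # too little vRAM for any candidate

def detect_vram_gib() -> Optional[float]:
    """Total memory of the first NVIDIA GPU in GiB, or None if unavailable."""
    if shutil.which("nvidia-smi") is None:
        return None
    try:
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=memory.total", "--format=csv,noheader,nounits"],
            capture_output=True, text=True, check=True, timeout=10,
        )
        first_gpu = result.stdout.strip().splitlines()[0]
        return float(first_gpu) / 1024  # nvidia-smi reports MiB
    except (subprocess.SubprocessError, OSError, IndexError, ValueError):
        return None

if __name__ == "__main__":
    vram = detect_vram_gib()
    if vram is None:
        print("No NVIDIA GPU detected; cannot choose settings")
    else:
        print(f"{vram:.1f} GiB vRAM -> {select_settings(vram)}")
```

With these assumed numbers, a 24 GiB card keeps 16-bit weights with an 8,192-token context; at 12 GiB the heuristic drops to 8-bit, at 8 GiB to 4-bit, and at 5 GiB it also shortens the context to 2,048 tokens. Ordering candidates by preference keeps the rule simple: quality is reduced only as far as the hardware requires.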

Low-vRAM optimization lets community nodes take on a larger share of on-demand AI inference tasks, even on consumer-grade GPUs with limited vRAM. As a result, node operators can participate more actively in the network and earn more TFUEL rewards, which strengthens the incentive for community engagement and contribution.

Why this matters for EdgeCloud

Theta EdgeCloud’s network of over 30,000 community-run edge nodes represents a large pool of distributed GPU compute. But that compute is only useful if the software running on it can actually take advantage of the available hardware. Many community nodes run consumer GPUs such as the NVIDIA RTX 3090 or 4090, which are capable cards with 24GB of vRAM but fall well short of data center cards like the A100 (40GB or 80GB) or H200 (141GB).

By bringing the vRAM requirements down and making container images hardware-aware, a much larger portion of the edge network can now participate in running popular AI workloads. That means more available capacity for inference tasks, better utilization of the existing node base, and a more practical path to serving real AI workloads across the distributed network.

This is the kind of under-the-hood engineering work that doesn’t always make headlines but directly improves what EdgeCloud can deliver. It also aligns with a broader industry trend where techniques like quantization are making large AI models accessible on less expensive hardware, something that benefits any platform built on distributed consumer GPUs.

The optimized model templates are available now on Theta EdgeCloud. Find out more at https://thetaedgecloud.com

Flux and Llama 3 Now Run Across EdgeCloud’s Community Network was originally published in Theta Network on Medium, where people are continuing the conversation by highlighting and responding to this story.
