Anthropic Data Policy: Urgent Choice for Claude Users on AI Training

BitcoinWorld

In the rapidly evolving world of artificial intelligence, where data is often considered a critical resource, the lines between innovation and privacy are constantly being redrawn. For many in the cryptocurrency space, the idea of data ownership and control is paramount, echoing the very principles of digital autonomy. Now, Anthropic, a leading AI developer, is putting its Claude users at a crossroads, demanding a crucial decision that resonates with these core values: opt out or allow your conversations to fuel AI training.

Anthropic Data Policy: What’s Changing for Claude Users?

Anthropic has announced significant revisions to its user data handling, requiring all Claude users to make a choice by September 28. This decision will determine whether their conversations will be used to train Anthropic’s advanced AI models. This marks a substantial shift from previous practices, where consumer chat data was not utilized for model training.

Here’s a breakdown of the key changes:

- New Default: Previously, Anthropic did not use consumer chat data for model training. Now, the company intends to train its AI systems on user conversations and coding sessions.

- Extended Retention: For users who do not opt out, data retention will be extended to five years. Prior to this update, prompts and conversation outputs for consumer products were generally deleted from Anthropic’s back end within 30 days, unless legally required otherwise or flagged for policy violations, in which case they might be retained for up to two years.

- Affected Users: These new policies apply to all Anthropic consumer product users, including Claude Free, Pro, and Max subscribers, as well as those using Claude Code.

- Unaffected Users: Business customers, such as those using Claude Gov, Claude for Work, Claude for Education, or API access, will not be impacted by these changes. This mirrors the approach taken by OpenAI, which also shields its enterprise customers from certain data training policies.

Taken together, these changes alter the default privacy terms for millions of Claude users.

Why is AI Data Training So Crucial for Anthropic?

Anthropic frames these changes around user choice and mutual benefit. The company suggests that by not opting out, users will “help us improve model safety, making our systems for detecting harmful content more accurate and less likely to flag harmless conversations.” Furthermore, users will “also help future Claude models improve at skills like coding, analysis, and reasoning, ultimately leading to better models for all users.” In essence, the message is: help us help you.

However, the underlying motivations are likely more strategic than purely altruistic. Like every other large language model company, Anthropic requires vast amounts of high-quality data to refine and advance its AI. Accessing millions of real-world Claude interactions provides the precise kind of conversational content necessary for robust AI data training. This direct access to user conversations can significantly enhance Anthropic’s competitive standing against major rivals such as OpenAI and Google, who are also in a fierce race to develop the most capable AI models. Effective AI model development relies heavily on diverse and extensive datasets, making user interactions an invaluable resource for improvement and innovation.

Navigating Claude AI Privacy: Your Opt-Out Decision

For anyone concerned about Claude AI privacy, the decision is time-sensitive. Users must actively opt out by September 28 if they wish to prevent their data from being used for AI training. New users joining Claude will be prompted to set this preference during signup. However, existing users face a different scenario.

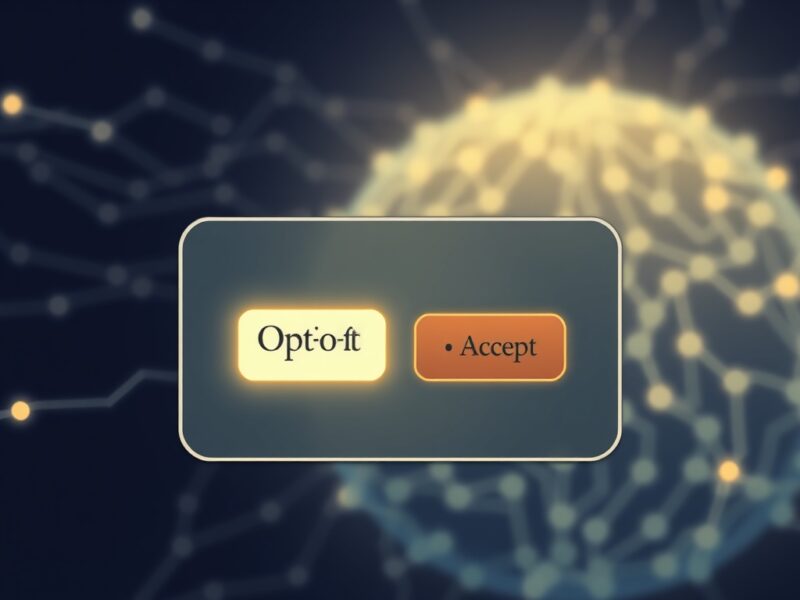

Upon logging in, existing users are presented with a pop-up titled “Updates to Consumer Terms and Policies.” This pop-up features a prominent black “Accept” button. Below this button, in much smaller print, is a toggle switch for training permissions, which is set to “On” by default. This design raises significant concerns that users might quickly click “Accept” without realizing they are consenting to data sharing for AI training. As The Verge observed, the interface could easily lead to inadvertent consent.

The stakes for user awareness are exceptionally high. Privacy experts have consistently warned that the complexity inherent in AI systems often makes achieving meaningful user data consent incredibly difficult. The way these policy changes are presented can significantly impact whether users genuinely understand the implications of their choices.

User Data Consent: Industry Trends and Challenges

Beyond the competitive pressures of AI development, Anthropic’s policy changes also reflect broader industry shifts and increasing scrutiny over data retention practices. Companies like Anthropic and OpenAI are under the microscope regarding how they manage and utilize user data.

For instance, OpenAI is currently engaged in a legal battle, fighting a court order that demands the company retain all consumer ChatGPT conversations indefinitely, including deleted chats. This order stems from a lawsuit filed by The New York Times and other publishers. In June, OpenAI COO Brad Lightcap criticized this as “a sweeping and unnecessary demand” that “fundamentally conflicts with the privacy commitments we have made to our users.” This court order impacts ChatGPT Free, Plus, Pro, and Team users, though enterprise customers and those with Zero Data Retention agreements remain protected.

What is alarming across the industry is how much confusion these constantly changing usage policies create for users, many of whom remain unaware of the shifts. Technology evolves rapidly, so policy adjustments are inevitable, but many of these changes are sweeping and are mentioned only briefly amid other company news. For example, Anthropic’s recent policy update was not prominently featured on its press page, which suggests the company was downplaying its significance. This lack of transparency, coupled with confusing UI designs, often means users agree to new guidelines without fully understanding them.

Under the Biden Administration, the Federal Trade Commission (FTC) warned that AI companies risk enforcement action if they engage in “surreptitiously changing [their] terms of service or privacy policy, or burying a disclosure behind hyperlinks, in legalese, or in fine print.” Whether the commission, currently operating with a reduced number of commissioners, continues to actively monitor these practices remains an open question, one that has been put directly to the FTC.

The Future of AI Model Development and User Trust

The ongoing debate surrounding Anthropic’s data policy and similar moves by other AI giants highlights a critical tension: the desire for rapid AI model development versus the imperative to protect user privacy. High-quality data is undeniably essential for creating more capable, safer, and less biased AI systems. However, the methods used to acquire and manage this data must align with ethical standards and respect user autonomy.

For users, the takeaway is clear: vigilance is paramount. Actively reviewing privacy policies, understanding opt-out options, and questioning default settings are crucial steps in maintaining control over personal data in the age of AI. For AI companies, fostering trust will depend on greater transparency, clearer communication of policy changes, and user-friendly interfaces that genuinely facilitate informed consent rather than subtly nudging users towards data sharing.

The future of AI hinges not just on technological advancements but also on building a foundation of trust with its users. Without clear, explicit user data consent and robust privacy safeguards, the public’s willingness to engage with and adopt AI technologies could be significantly undermined.

Summary

Anthropic’s new data policy represents a pivotal moment for Claude users, demanding a clear choice regarding their data’s use in AI training. While Anthropic cites benefits for model improvement and safety, the move underscores the intense need for high-quality data in the competitive AI landscape. Concerns persist regarding the clarity of policy changes, the design of consent mechanisms, and the broader industry trend of shifting privacy standards. As AI continues to evolve, the balance between innovation and user privacy will remain a critical challenge, requiring both user vigilance and corporate responsibility to navigate effectively.

This post Anthropic Data Policy: Urgent Choice for Claude Users on AI Training first appeared on BitcoinWorld and is written by Editorial Team
