The Robodog that exposed India’s AI education crisis

The internet was in splits, but the laughter carried an edge.

On February 17, 2026, at Bharat Mandapam, India’s marquee convention venue, a Galgotias University professor told a DD News camera that her university had built “Orion,” a sleek four-legged robodog, as part of a ₹350-crore AI Centre of Excellence.

The claim didn’t survive the afternoon.

Chinese media and tech observers quickly alleged the machine was Unitree’s Go2, a robot sold online, and the showcase spiralled from publicity to embarrassment.

Power to the stall was reportedly cut, the university was ushered out, an apology followed, and a probe was announced.

For a country ranked third in Stanford’s 2025 Global AI Vibrancy tool, the spectacle was not just a PR stumble; it was a stress test.

When the memes faded, one question remained: what kind of AI ecosystem produces a demo that can’t stand up to a search bar?

The report card nobody wanted

India’s AI narrative today is backed by serious validation.

Stanford’s Global AI Vibrancy tool for 2025 placed India third, a jump that Indian media read as proof that the country is rising across multiple AI indicators.

That ranking matters because it reflects breadth: talent activity, research signals, and ecosystem scale, not just one flashy product launch.

But AI is one of those fields where scale can hide shallowness.

A country can have a lot of AI users, people who can deploy off-the-shelf tools, and still struggle to produce AI builders: researchers and engineers who create new methods, publish durable results, and generate defensible IP.

This is where India’s story runs into the fine print.

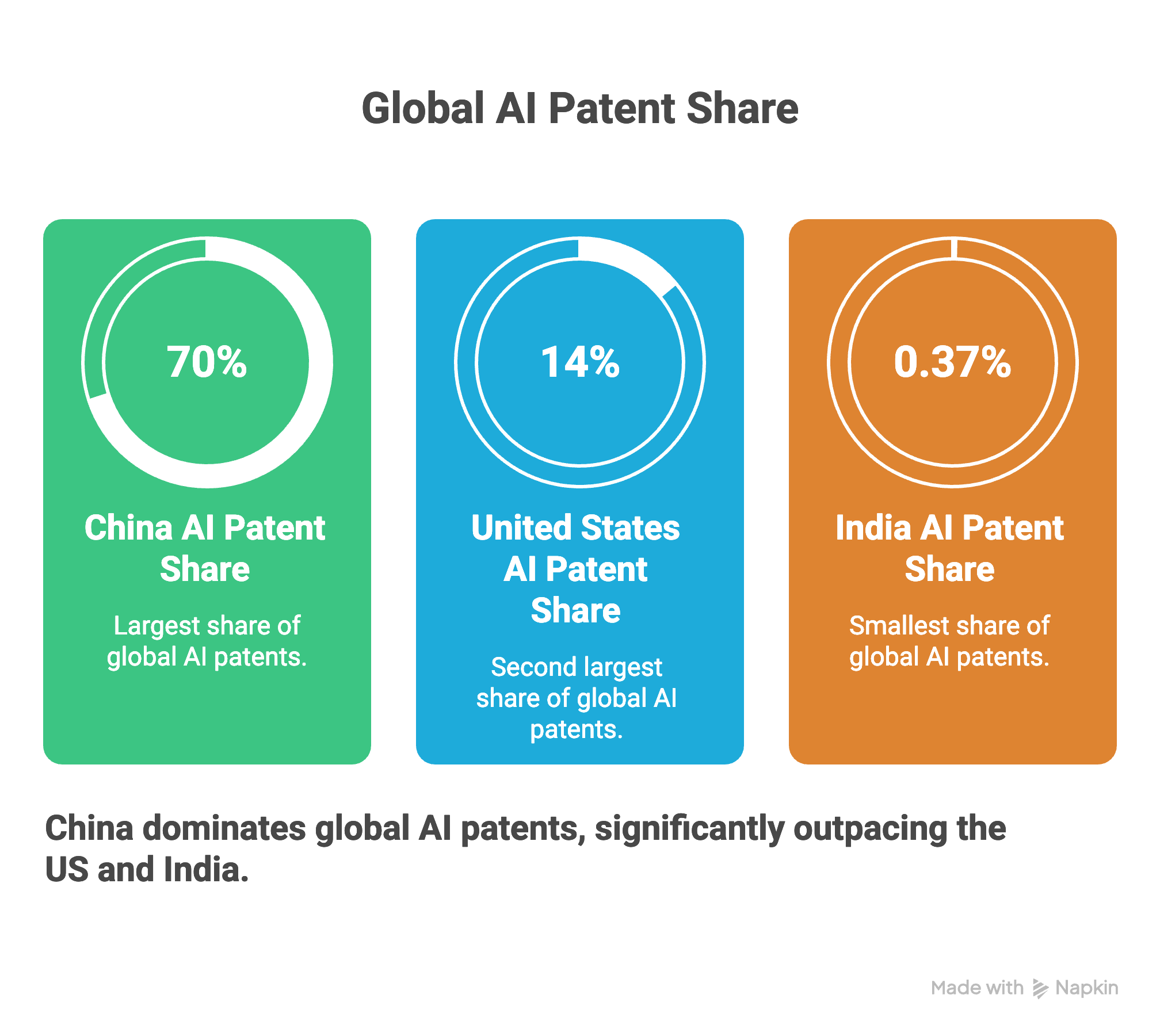

Several compilations and policy-facing summaries have highlighted how small India’s slice of global AI patents remains compared to the two largest AI powers.

Stanford’s AI Index Report 2025 places India at about 0.37% of global AI patents, versus China at about 70% and the US at about 14%.
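To put those shares on a common footing, the short sketch below divides each country's quoted share by India's. It uses only the three percentages cited above; the variable and function names are illustrative, and the figures are rounded approximations rather than exact counts.

```python
# Shares of global AI patents as quoted in the article (percent, approximate).
patent_share_pct = {"India": 0.37, "China": 70.0, "US": 14.0}


def ratio_to_india(shares):
    """Return how many times larger each country's share is than India's."""
    base = shares["India"]
    return {country: share / base
            for country, share in shares.items()
            if country != "India"}


ratios = ratio_to_india(patent_share_pct)
for country, ratio in ratios.items():
    print(f"{country}: about {ratio:.0f}x India's share")
```

On these rounded inputs, China's share works out to roughly 189 times India's and the US share to roughly 38 times.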

Patents are an imperfect measure: many are low quality, some breakthroughs go unpatented, and open-source is real.

But as proxies for who owns foundational technology go, they are the best available, and India's slice is vanishingly small.

If India’s patent share is that small, the implication is not that India lacks talent. It’s that the ecosystem has been better at training people to use technology than to create it at scale.

That is why the Robodog episode landed so sharply. Buying a robot and building a robot are not the same task.

The first is procurement. The second is research, manufacturing, systems engineering, testing, and iteration: work that needs labs, budgets, and experienced mentors.

When a system consistently underinvests in the second, it starts rewarding the appearance of innovation over the practice of it.

The funding floor beneath “innovation”

Behind most “fake innovation” stories is a real scarcity story.

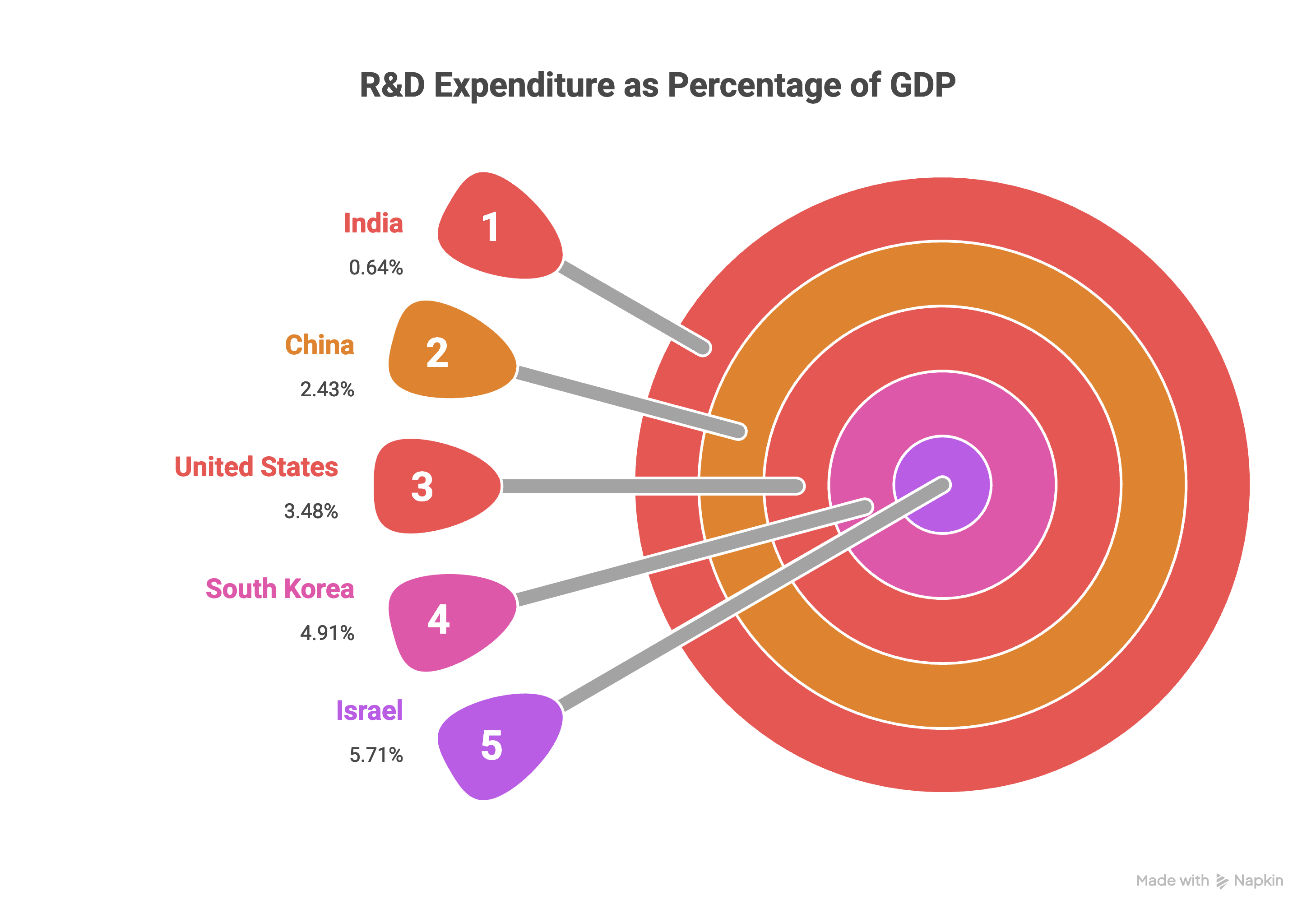

India’s expenditure on research and development is about 0.6% of GDP, as cited in reporting based on the Economic Survey 2025–26.

The same Economic Survey-linked coverage points to a second constraint: India’s business sector contributes only about 41% of total R&D spend.

Those numbers shape everything downstream. When private capital does not meaningfully fund university research, the system leans heavily on government budgets and tuition revenue.

That tends to produce predictable behaviour. Universities optimise for what is cheap to show and easy to count (new centres, new MoUs, new “AI” degree programmes, and conference papers) rather than what is expensive to build, like compute access, high-quality datasets, hardware labs, and sustained research supervision.

Peer-economy comparisons make India’s position feel even more personal.

Economic Survey-based reporting has contrasted India’s 0.6% R&D intensity with much higher levels in the US, China, and South Korea.

The contrast gets starker when India’s business-sector share is set against the far higher private participation in those economies.

In other words, India is trying to win a deep-tech race with a shallow R&D pool, and with companies that are not yet shouldering the bulk of research risk.

This is where accountability gets distorted. When resources are thin and metrics are noisy, institutions chase signals.

They rename imported drones as “indigenous platforms.” They treat vendor partnerships as “research achievements.”

They file low-grade patents to inflate innovation dashboards. They push faculty toward quantity-based publication targets.

None of these behaviours is defensible. But they are understandable in a system that rewards outputs that look like progress, even if the underlying capability is missing.

Where the pipeline breaks: Classrooms, mentors, and compute

If you want to know why the “Orion” moment is plausible, you have to step away from the expo hall and walk into the average engineering classroom.

Invezz spoke with Prof. Naveen Garg, Head of the Department of Computer Science and Engineering at IIT Delhi, who frames the breakage as a fundamentals-and-mentorship issue.

India needs, he says, “a larger number of graduates with strong fundamentals in math and computer science” and “a larger pool of people who can provide the mentorship for quality AI research.”

He is equally blunt about incentives:

“The government does not seem to be seriously providing the incentives needed to make us a top research-led country in the AI domain. The big players like China and the US have actually invested a huge amount of resources in creating quality researchers. That has not happened yet in the country,” he said.

That “mentor pool” problem is not academic nitpicking. It decides whether students learn recipes or reasoning.

Modern AI work demands statistical thinking, optimisation, and solid systems knowledge.

It also demands judgment: how to evaluate a model, how to test it under real-world conditions, how to detect errors, and how to communicate uncertainty responsibly.

Then there is the infrastructure constraint, which quietly shapes what students can do.

Hardeep, a Senior AI Engineer and IIIT Prayagraj alumnus, credits his degree with giving him “strong theoretical foundations in Machine Learning (ML), Natural Language Processing (NLP) and algorithms.”

But he adds a crucial detail while speaking with Invezz: “Transformers and modern LLMs weren’t covered back then, and hands-on project work was limited due to infrastructure costs.”

For non-specialist readers: “Transformers” are a model architecture that powers many modern AI systems, including large language models (LLMs) that generate text.

Training and testing them at a meaningful scale often requires expensive compute (GPUs) and careful engineering, resources that many colleges do not have.

Hardeep’s conclusion is the line that should worry policymakers the most:

“Today, building real AI products is far more accessible; success depends largely on individual curiosity and self-learning rather than just university training.”

Self-learning is not a flaw; it is a virtue in tech.

The problem is when self-learning becomes a substitute for institutional capability.

When universities consistently outsource the hardest parts of training to YouTube, open courses, and personal laptops, they still collect the reputation benefits, but students pay the real price in time, uncertainty, and uneven outcomes.

This is why compute access has become the new dividing line. India’s policy push has started recognising that constraint.

Reports around the IndiaAI Mission have pointed to large-scale GPU deployment, with about 18,000 GPUs already deployed under the mission.

That matters because shared compute can lower the barrier for researchers and startups who otherwise cannot run serious experiments.

But GPU procurement is not the same as research capacity.

Compute without mentors produces churn: people running experiments without strong guidance on evaluation, ethics, and reproducibility.

Mentors without compute produce frustration: students who understand the theory but can’t do meaningful hands-on work.

A credible AI education strategy needs both, across more than a thin layer of elite campuses.

The credibility problem: A research culture you can’t unplug

A robodog can be unplugged. A credibility crisis cannot.

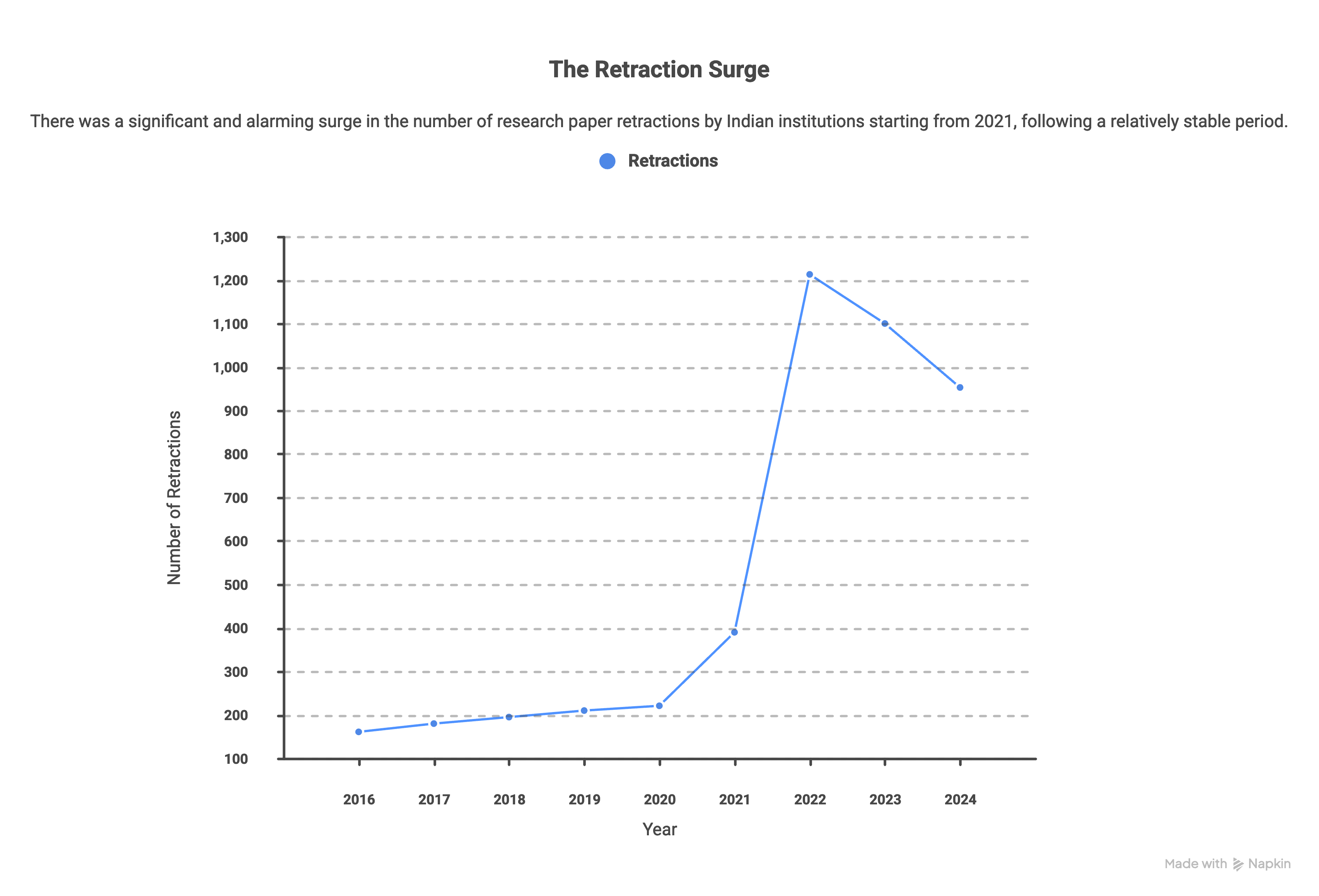

India’s publishing ecosystem has faced mounting scrutiny for research integrity, including the role of “paper mills” and manipulated peer review in some cases.

A September 2025 peer-reviewed study published in the Journal of Data Science, Computing and Information Sciences used Retraction Watch data and examined 2,853 retracted papers by Indian scholars from 2010 to 2024.

The study found that retractions surged after 2021, with 57.55% occurring between 2021 and 2024.

The same analysis lists the leading cited reasons as fake peer review (1,007 papers), plagiarism (880), and data manipulation/falsification (746).
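As a rough check on what those figures imply, the sketch below converts the quoted percentage into a paper count and each cited reason into a share of the 2,853 retractions. The names are illustrative, and it is an assumption here that a single paper may carry more than one reason, so the shares are shown separately rather than summed.

```python
# Figures as quoted in the article from the September 2025 study.
total_retracted = 2853      # retracted papers, 2010 to 2024
share_2021_2024 = 0.5755    # fraction retracted between 2021 and 2024

# Leading cited reasons. Assumption: categories may overlap, so do not sum them.
reason_counts = {
    "fake peer review": 1007,
    "plagiarism": 880,
    "data manipulation/falsification": 746,
}

# Approximate number of retractions falling in 2021 to 2024.
papers_2021_2024 = round(total_retracted * share_2021_2024)

# Each reason as a fraction of all retracted papers in the sample.
reason_share = {reason: count / total_retracted
                for reason, count in reason_counts.items()}

print(f"Retractions in 2021-2024: about {papers_2021_2024}")
for reason, share in reason_share.items():
    print(f"{reason}: {share:.1%} of retracted papers")
```

On the quoted numbers, that is about 1,642 retractions in the final four years of the sample, with fake peer review cited in roughly 35% of all retracted papers.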

For readers unfamiliar with “fake peer review”: peer review is supposed to be independent expert checking before publication.

When it is faked or compromised, unreliable work can enter the scientific record.

Retractions are the system’s emergency brake, but they also reveal the cost of weak incentives and weak enforcement.

Why does this matter to AI? Because AI progress depends on trustworthy research.

If the research pipeline is noisy (papers that can’t be reproduced, results that are overstated, datasets that are questionable), industry adoption slows and global credibility takes a hit.

The country ends up spending more time chasing metrics than building durable capability.

The robodog episode, in that sense, is the visual cousin of a deeper pattern: performance over proof.

When institutions are rewarded for being seen at the frontier rather than for doing frontier work, they will predictably invest in optics.

That is also why the policy response can’t stop at punishment. It must change incentives: what rankings value, what accreditation audits examine, and what funding is tied to.

If the system keeps paying for volume, it will keep getting volume, sometimes honest, sometimes not.

Builders vs users: The talent leak

India’s AI debate increasingly splits into two camps: the optimists who argue India is becoming a builder, and the sceptics who say India is still mostly a user at scale.

Prof. M Jagadesh Kumar, former UGC Chairman and Chair of the NEP 2020 Review Committee, makes the optimistic case with conviction:

"Today, Indian institutions are not just users of AI. They are rapidly becoming builders of AI. Indian institutions are increasingly collaborating with industry to develop AI solutions in education, health, governance, agriculture, and smart cities. I can give you some examples," Prof. Kumar told Invezz.

"The Ministry of Education has supported AI Centres of Excellence, such as TANUH at IISc. This centre works on scalable AI solutions for healthcare (especially non-communicable diseases). IIT Madras Global Research Foundation announced an Applied AI Innovation Centre to accelerate applied AI. The centre connects research with responsible real-world use," he added.

He further emphasised the IndiaAI Mission, which is promoting AI innovation, access, and India-centric solutions in collaboration with Indian educational institutes.

"These examples show that many Indian universities are working with AI that is inclusive and deployable."

On the Galgotias incident, Prof. Kumar is measured and does not name any institution:

"If any institute oversteps its claims, the mitigating measures should be proportionate, educational, and corrective. Institutions should also train their teams in ethics, research integrity, and responsible communication. This approach is the surest way to protect the reputation of India's genuine innovators," he said.

"It also prevents any possible inflated claims from creating a false narrative. But there is no doubt in my mind that India’s higher education institutes are rapidly building AI capability and creating inclusive solutions," he added.

Still, the sceptic’s point is hard to ignore: elite outliers do not define the median. A handful of top institutes can genuinely build and publish.

But India’s higher education system is vast and uneven. If most campuses cannot offer real compute access, credible mentorship, and an integrity-first research culture, “builder” status remains concentrated at the top.

Classrooms over ceremonies

And even when India produces strong AI talent, the country struggles to retain it.

Coverage summarising Stanford AI Index-linked talent metrics has highlighted India’s net AI talent migration score at -1.55, signalling a net outflow on that measure.

That matters because brain drain is not just a headline.

It is a compounding loss of mentors, founders, and research leaders, exactly the people needed to strengthen the domestic pipeline.

In financial terms, India is investing in talent formation but not capturing enough of the long-term returns.

And when returns leak out, institutions feel even more pressure to perform for rankings and PR, because the deeper outcome, a stable research ecosystem, remains hard to show quickly.

The Unitree Go2 (whether it was that model or not) is ultimately a prop in a bigger story.

India is not short on ambition. It is not short on smart students. It is short on the kind of education-and-research architecture that turns ambition into owned technology.

The robodog was not the scandal. The system that made it plausible was.

The post The Robodog that exposed India’s AI education crisis appeared first on Invezz
